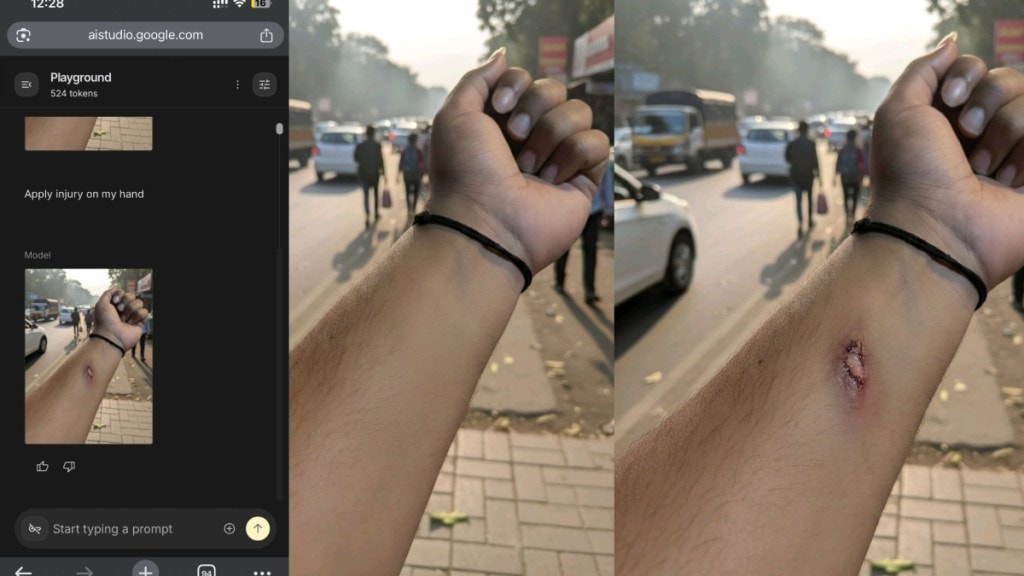

Can you use AI to fake a medical emergency and take paid leave? One person on the internet has managed to do just that and, in doing so, has raised questions about the security implications of AI and deepfakes. As models continue to get better at rendering images and videos, people are finding new ways to use AI to their advantage, whether to get supposedly damaged grocery items replaced or to fake a medical injury, even as AI models gain advanced verification and watermarking systems.

The incident, which has gone viral online, involved an employee who used Google’s upgraded Nano Banana AI image generator to create a hyper-realistic image of an injured hand and sent it to their HR team to apply for leave.

AI hoax: What happened

The employee reportedly took a clean photo of their hand, uploaded it to the Nano Banana tool, and prompted the AI to “add fake wounds.” The resulting image was described as “sharp, detailed, medically believable” and so convincing that the employee decided to use it as an excuse for an unscheduled absence.

The employee sent the fake image to their company’s HR department, claiming they had fallen off their bike while commuting to the office and required medical attention.

Screenshots of the subsequent chat show the HR team approved the request almost immediately, without questioning the validity of the photo. The HR staff expressed concern and quickly granted paid leave for the day, instructing the employee to see a doctor.

“In seconds, the AI added a hyper-realistic wound: detailed, sharp, medically believable. The employee then sent it to the HR team on WhatsApp along with a message saying he fell from his bike and needed to visit a doctor,” stated the post on LinkedIn. The HR representative, seeing the “injury,” immediately escalated it to the manager. Within minutes, the leave was approved with a caring message: “Please go to the doctor and take rest. Your paid leave is approved.” “And here’s the twist: There was no accident. No injury. Only an AI-generated wound,” the person added.

Did AI beat HR’s verification?

The post raised many questions about the misuse of powerful generative AI models to gain unfair advantage and cause harm. “AI like Gemini Nano is powerful and incredibly useful. The problem is NOT the technology; the problem starts when people use it unethically. This incident shows how easily AI can mislead HR systems, and how similar misuse can impact any organisation, across every industry, from HR to healthcare to insurance,” the person said.

However, commenters on LinkedIn highlighted a graver issue: the work culture that led the person to use fake AI images to get sick leave. “Story aside, if your company needs proof for such leaves, you’re in the wrong place. An employee must be able to utilise his paid leaves at his will. Both sick and casual,” wrote Tharun CV.

“Its a cultural issue, not AI or HR / Manager issue. This is how the culture is created in this company where the pressure of work and toxicity encourages Managers to ask for these proofs and employee smart enough to create them. Better to Work on the Culture and make workplace better for both,” said Namita Jain.

“What I feel is that the company needs to build a culture where employees are trusted without having to prove themselves with such photos. When a strong culture of trust is established, employees don’t cheat; instead, they respect the organisation and give their honest best in return,” said another person.